In the wake of Getty announcing that it had “commenced legal proceedings” in the High Court of Justice in London against Stability AI, Getty Images (US), Inc. has lodged what might be the most notable domestic lawsuit currently on the artificial intelligence (“AI”) front amid a larger rise in cases that center on companies’ unauthorized use of others’ works to train AI models. According to the complaint that it filed with the U.S. District Court for the District of Delaware on Feb. 3 (as first reported by Copyright Lately), Getty claims that as part of a “brazen infringement of [its] intellectual property on a staggering scale,” Stability AI has copied millions of photographs from its collection “without permission from or compensation to Getty Images, as part of its efforts to build a competing business.”

Rather than attempting to negotiate a license with Getty for the use of its content, and “even though the terms of use of [its] websites expressly prohibit unauthorized reproduction of content for commercial purposes,” Getty claims that Stability AI has “scrap[ed] links to billions of pieces of content from various websites, including Getty Images’ websites.” To date, Getty says that it has identified “over 12 million links to copyrighted images and their associated text and metadata on its websites in the datasets that were used to train Stable Diffusion.” On the back of “intellectual property owned by Getty Images and other copyright holders,” Getty argues that Stability AI created its image-generating model, which “uses artificial intelligence to deliver computer-synthesized images in response to text prompts.”

Getty contends that its images are “highly desirable” for use in training artificial intelligence programs, such as Stable Diffusion, “because of their high quality, and because they are accompanied by content-specific, detailed captions and rich metadata.”

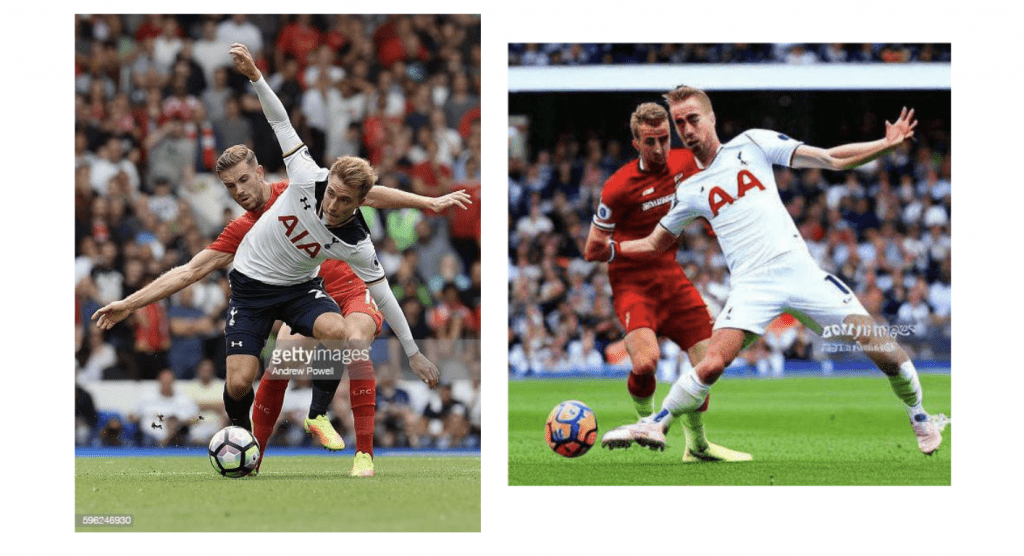

In addition to allegedly co-opting its imagery to train the Stable Diffusion model, Getty asserts in its lawsuit that “Stability AI has removed or altered [the] copyright management information” from that imagery, “knowingly remov[ing] Getty Images’ watermarks from some images in the course of its copying as part of its infringing scheme.” The 3-year-old tech company has also “provided false copyright management information, and infringed [its] famous trademarks,” per Getty, which specifically states that “the Stable Diffusion model frequently generates output bearing a modified version of the Getty Images watermark.” (The fact that the output delivered by Stability AI includes a modified version of a Getty Images watermark “underscor[es] the clear link between the copyrighted images that Stability AI copied without permission and the output its model delivers,” Getty argues.)

On the trademark front: Getty claims that the appearance of its modified watermark in Stable Diffusion output is causing “confusion as to the source of the images and falsely implying an association with Getty Images.” Beyond causing confusion, Getty asserts that “while some of the output generated through the use of Stable Diffusion is aesthetically pleasing, other output is of much lower quality and at times ranges from the bizarre to the grotesque.” As such, Stability AI’s “incorporation of Getty Images’ marks into low quality, unappealing, or offensive images dilutes those marks in further violation of federal and state trademark laws.”

On the copyright front: Getty claims that Stability AI has infringed its copyrights by “reproducing [its] copyrighted works and creating derivative works therefrom without any authorization.”

On the copyright management information front: “By applying a modified version of [its] watermarks to output generated through use of Stable Diffusion and the DreamStudio interface, Stability AI has provided false copyright management information in violation of 17 U.S.C. § 1202(a).”

Stability AI “now competes directly with Getty,” the photo agency argues, claiming that Stability AI is “marketing Stable Diffusion and its DreamStudio interface to those seeking creative imagery, and its infringement of Getty’s content on a massive scale has been instrumental to its success to date.” The “gravity of Stability AI’s brazen theft and free-riding is compounded by the fact that, by utilizing Getty Images’ copyrighted content for artificial intelligence and machine learning, Stability AI is stealing a service that Getty Images already provides to paying customers in the marketplace for that very purpose.”

With the foregoing in mind, Getty accuses Stability AI of copyright infringement, trademark infringement and dilution, unfair competition, deceptive trade practices in violation of Delaware’s Uniform Deceptive Trade Practices Act, providing false copyright management information, and removing or altering copyright management information. In addition to an award of “all damages suffered by Getty and for any profits to or gain by Stability AI attributable to” its infringement of Getty’s copyrights and trademarks, Getty is seeking injunctive relief and an order requiring Stability AI to destroy “all versions of Stable Diffusion trained using Getty’s content without permission.”

THE BIGGER PICTURE

“Silicon Valley’s attention has decidedly shifted” from crypto, blockchain and web3 to AI, according to Bloomberg, and “every company is angling for a piece.” Companies are not the only ones caught up in the AI hype; creators are, too, albeit by way of litigation rather than new ventures. The Getty lawsuit falls neatly in line with a growing number of cases that challenge AI companies’ unauthorized use of assets, ranging from imagery to source code, to train AI models. (And the AI boom is likely to prompt even more lawsuits like these.)

Getty’s lawsuit is not the first to be filed over the use of copyright-protected imagery to train AI image generators, but it is notable nonetheless, as it is the first (or one of the very first) to actually claim copyright infringement, as well as trademark infringement and dilution, in connection with such activities. To date, most of the lawsuits in this vein have set out (and are limited to) claims under the Digital Millennium Copyright Act (“DMCA”) based on the defendant(s)’ alleged removal of copyright management information from the underlying assets.

These uses of copyright-protected assets to train AI models (such as those that power generators like DALL-E, ChatGPT, and Stable Diffusion), and the resulting creations, raise interesting copyright questions, including whether such activities amount to infringement, a question that depends heavily on the doctrine of fair use. While courts have not yet decided whether using copyright-protected works to train AI models constitutes fair use under U.S. copyright law, a fair use argument could be viable in light of Authors Guild, Inc. v. Google, Inc., in which the Second Circuit held that Google’s digitization and subsequent use of copyrighted works for its Google Books and Library Project was fair use.

Companies are already invoking the fair use defense in the early stages of the AI litigation they face. As Microsoft and GitHub argued in response to the DMCA lawsuit filed against them in connection with the AI code generator Copilot, the plaintiffs did not claim copyright infringement (relying instead on copyright management information claims) in “an attempt to evade … the progress-protective doctrine of fair use,” as any copyright infringement claim “would run headlong into the doctrine of fair use.”

In response to the same lawsuit, in which it is also a defendant, OpenAI states that proving that “any copying was not fair use” would be “a heavy burden” for the plaintiffs in light of “the Supreme Court’s holding in the source-code context that ‘taking only what was needed to allow users to put their accrued talents to work in a new and transformative program … was a fair use of that material as a matter of law.’” (The holding in question comes from Google LLC v. Oracle America, Inc.)

Finally, given the significance of the fair use doctrine, the Supreme Court’s impending decision in Andy Warhol Foundation for the Visual Arts, Inc. v. Goldsmith will almost certainly be relevant here. Manatt, Phelps & Phillips’ Robert Jacobs stated in a recent note that “the odds strongly favor the Supreme Court giving the fair use doctrine a refresh in its upcoming decision addressing Andy Warhol’s recasting of Lynn Goldsmith’s well-known photographic portraits of music icon Prince.” Whatever the outcome, according to the note, “the decision is likely to address – and potentially curtail – the ever-increasing emphasis certain lower courts have placed on how transformative a secondary work is relative to the original.”

The case is Getty Images (US), Inc. v. Stability AI, Inc., 1:23-cv-00135 (D.Del.).